It Is Not What It Looks Like

Almost every conversation today bends toward artificial intelligence. And in many cases, AI isn’t just the subject of the conversation; it’s also a participant in it. If Alan Turing were to return, he would probably say we already have superhuman intelligence. By several benchmarks, machines now outperform humans across cognitive tasks that once felt uniquely ours.

But if AI is truly the game changer it’s made out to be, we should already be seeing its effects ripple across the broader economy. Economists have a word for this process: diffusion. Every major technological breakthrough has taken time to diffuse into economic systems. Electricity did. The internet did. Smartphones did. Technologies don’t transform GDP overnight; they spread gradually through firms, industries, and labor markets. Yet none of those prior waves felt as cognitively disruptive as AI does today.

To understand whether diffusion is happening, we have to look at productivity. The cleanest conceptual way to measure productivity is simple: how much work is done in a specified amount of time. At the scale of an entire economy, one rough but useful proxy is GDP divided by the number of people working. In other words, how much output does each worker generate on average? This measure has a limitation. It does not explicitly account for capital or technological investments. It is not a perfect model of total factor productivity. But as a loose measure, it works surprisingly well.
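As a minimal sketch of that proxy, the calculation is a single division. The function name and the figures below are hypothetical, chosen only to illustrate the arithmetic; they are not real statistics.

```python
def output_per_worker(gdp: float, employed: float) -> float:
    """Rough labor productivity proxy: total output divided by people working.

    Ignores capital and technology inputs, so it is not total factor productivity.
    """
    if employed <= 0:
        raise ValueError("employed must be positive")
    return gdp / employed


# Hypothetical figures for illustration only.
gdp = 25_000_000_000_000   # annual output, in dollars
employed = 160_000_000     # number of people working

print(round(output_per_worker(gdp, employed)))  # prints 156250
```

Tracking how this number changes over time matters more than its level in any single year.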

If AI really is the productivity boon we hope it will be, then within the next 6 months to a year we should observe something specific. GDP should rise while unemployment either rises or stays the same. Output growth needs to outpace growth in the number of workers, so that more economic value is produced per worker. So far, we haven’t seen that divergence in any clear or decisive way.
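The divergence described above can be expressed as growth in output per worker. The sketch below assumes simple period-over-period growth rates; the function names and sample rates are hypothetical and only illustrate the comparison.

```python
def productivity_growth(gdp_growth: float, employment_growth: float) -> float:
    """Growth in output per worker implied by GDP and employment growth rates.

    Rates are fractions (0.03 means 3%).
    """
    return (1 + gdp_growth) / (1 + employment_growth) - 1


def is_diverging(gdp_growth: float, employment_growth: float) -> bool:
    """True when output grows faster than the number of workers."""
    return productivity_growth(gdp_growth, employment_growth) > 0


# Hypothetical rates: GDP up 3%, employment up 1%.
print(f"{productivity_growth(0.03, 0.01):.4f}")  # prints 0.0198
print(is_diverging(0.02, 0.02))                  # prints False
```

When both rates are small, the result is close to the simple difference between GDP growth and employment growth.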

There is also a more immediate test. If you are using AI at work, has your output meaningfully improved? For AI to have a measurable economic impact, you should either be doing more work in your 40 hours or finishing the same workload with more free time on your hands. At scale, one of these effects needs to compound across millions of workers.

Technologically, however, progress has been dramatic. Just a year ago, AI systems struggled with anything beyond beginner-level tasks. Context windows filled up quickly. Multi-step reasoning broke down. Agents were mostly theoretical. Today, models can perform deep research, draft entire project requirements, write production-level code, and operate across long task chains. The conversation has shifted from chat interfaces to autonomous agents capable of extended work on complex objectives.

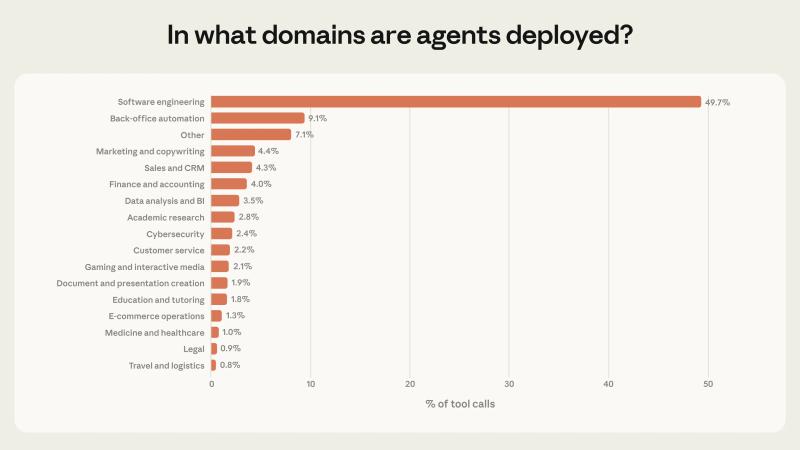

Claude Code is less than a year old and is reportedly one of Anthropic’s fastest products to reach a billion dollars in revenue. That speed is remarkable. Yet most of this agent-driven capability remains concentrated in software.

Software engineering itself represents only around 3–4% of the global workforce, by most estimates. Even if AI were dramatically increasing productivity inside that 3–4%, the effect on total economic output would still be limited. The majority of industries have not meaningfully integrated AI, and many have not even experimented with it. Most usage remains confined to chat interfaces. We have yet to see large-scale autonomous AI work embedded into operational systems. For most users, AI is still closer to a replacement for Google search than a fully autonomous coworker.
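A back-of-the-envelope sketch shows why a small workforce share caps the aggregate effect. It makes two strong simplifying assumptions that are not claims from the data: that the sector's share of output equals its share of workers, and that there are no spillovers into other sectors. The 30% gain is a hypothetical input.

```python
def aggregate_output_gain(sector_share: float, sector_gain: float) -> float:
    """Economy-wide output gain when only one sector becomes more productive.

    Assumes the sector's output share equals its workforce share and that
    gains do not spill over into other sectors (both are simplifications).
    """
    return sector_share * sector_gain


# Midpoint of the 3-4% workforce estimate, with a hypothetical 30% gain.
gain = aggregate_output_gain(sector_share=0.035, sector_gain=0.30)
print(f"{gain:.2%}")  # prints 1.05%
```

If software workers produce more output per head than average, the true effect would be larger than this sketch suggests, but it would still be bounded by the sector's share of total output.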

Financial markets, meanwhile, have reacted in waves. First, model providers and chip makers such as Microsoft and NVIDIA rallied sharply. Then AI infrastructure stocks followed, including memory manufacturers like Micron Technology and energy companies positioned to supply data centers. Markets tend to price in expectations long before reality catches up. The question is: what exactly are we pricing in?

If AI continues to grow at this scale and begins replacing jobs, that scenario is not automatically positive for the broader economy. If fewer people work in software, then software licensing revenue declines. IT services companies such as Infosys and Tata Consultancy Services have fallen to multi-year lows. At times it feels as though markets are reacting to speculative fiction rather than current economic data. There is a subtle dystopian undertone to some of the optimism.

If AI were truly a magical tool, we should be building more across every sector, not just generating code faster. In theory, this would lead to a world of abundance. But in a world of abundance, does money retain the same value? Cities built around technology could see population declines. Real estate markets in those hubs could crash. Even incremental feature releases from Anthropic have, over the past week, triggered sell-offs in adjacent sectors. Jensen Huang, for his part, is of the opinion that Salesforce and ServiceNow won’t go anywhere; they might just be used differently.

The economics beneath the surface are equally complex. AI is expensive. Tokens are largely subsidized by venture capital funding at the moment. Free cash flow across major players is being redirected into building data centers with the goal of doubling compute capacity in record time. Once funding dries up and investors begin demanding returns, one of two things must happen: either the technology becomes cheap enough to sustain itself, or costs get passed down to users.

GPUs introduce another constraint. At maximum performance, they have roughly 3 years of useful AI training life before being repurposed primarily for inference. Even if you secure compute capacity, you still need to secure hardware supply. We have already seen companies like Micron Technology shut down certain consumer memory lines because enterprise memory carries much higher margins.

The short-term economics of AI form a tight loop. You need enormous capital to train a frontier model. You can make more than 50% margins on inference. But to remain competitive, you must reinvest nearly all of it back into training and development. There are only so many cycles of this reinvestment treadmill before returns are tested. Data centers themselves are capital-intensive. Land is expensive. Electricity is not cheap. Cooling systems consume vast quantities of water.

The risk underlying everything is prediction. Capital is being allocated based on what AI is expected to become. If those goals are not met, the structure collapses. But if AGI were truly imminent, wouldn’t it make sense for every major player to lock in as much compute as possible for the foreseeable future? Dario Amodei believes that we are “at the end of the exponential.”

Consider Oracle Corporation. Its stock rallied 40% on partnership news with OpenAI, only to later fall more than 50%. Concerns about its ability to fund AI-related spending commitments, and its reliance on a loss-making OpenAI for future revenue, triggered a sell-off. Around the same time, Google, a firm generating roughly 70 billion dollars in free cash flow, raised a billion dollars through a 100-year bond. A company with that scale of internal funding capacity still chose to issue debt that matures in a century.

Technologically, we may already be operating at superhuman levels in narrow domains. Economically, however, the diffusion remains constrained. AI touches 3–4% of the workforce meaningfully. Productivity gains at the macro level are not yet clearly visible. In the next 6 months to a year, the data will matter more than the demos. If GDP begins to outpace labor growth decisively, the productivity story strengthens. If not, then the hype cycle may prove faster than the diffusion cycle.

Here are some questions I've begun to ask myself: Am I solving harder problems? Could AI do this over time? Am I outsourcing my thinking? Could I possibly set up agents to work on this problem right now? How much context do I need to solve any problem outside of my world view with the help of AI?

In conclusion, AI is here and helpful, but its integration into business is still some way from optimal. Not very far, perhaps. Maybe everything isn’t priced in after all.